#238 - GPT 5.4 mini, OpenAI Pivot, Mamba 3, Attention Residuals

Our 238th episode with a summary and discussion of last week's big AI news!

Recorded on 03/18/2026

Hosted by Andrey Kurenkov and Jeremie Harris

Feel free to email us your questions and feedback at andreyvkurenkov@gmail.com and/or hello@gladstone.ai

Read our text newsletter and comment on the podcast at https://lastweekin.ai/

In this episode:

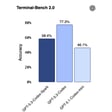

* OpenAI released GPT-5.4 mini and nano with 400k-token context windows at higher per-token prices, offset in part by claimed token-efficiency gains in Codex; nano is API-only and pitched for high-volume classification and data extraction despite a major price increase.

* Mistral open-sourced the Small 4 model family (MoE, 119B total/6B active) combining reasoning, multimodal, and coding-agent capabilities, and announced Forge to help businesses train or post-train custom models.

* Agent “operating system” competition intensified, with Meta-acquired Manus launching a local Mac agent and Nvidia announcing NemoClaw and its “Open Shell” sandboxed agent runtime; Nvidia also unveiled DLSS 5 and major hardware forecasts, including Groq LPU integration.

* Business and safety updates included OpenAI shifting focus toward productivity/enterprise amid competition, Microsoft reorganizing Copilot and frontier-model efforts, Meta delaying its next model, China-linked ByteDance deploying large Nvidia clusters abroad, and new safety work on steganography, chain-of-thought faithfulness, fine-tuning defenses, cyber-attack evals, and constitution/spec compliance.

A thank you to our current sponsors:

- Box - visit Box.com/AI to learn more

- ODSC AI - go to odsc.ai/east and use promo code LWAI for an additional 15% off your pass to ODSC AI East 2026.

- Factor - head to factormeals.com/lwai50off and use code lwai50off to get 50 percent off and free breakfast for a year

Timestamps:

- (00:00:10) Intro / Banter

- (00:01:56) News Preview

- Tools & Apps

- (00:02:39) OpenAI ships GPT-5.4 mini and nano, faster and more capable but up to 4x pricier

- (00:08:04) Mistral's new Small 4 model punches above its weight with 128 expert modules

- (00:14:03) Meta's Manus launches 'My Computer' to turn your Mac into an AI agent - 9to5Mac

- (00:17:57) NVIDIA Announces NemoClaw for the OpenClaw Community | NVIDIA Newsroom + Nvidia boosts knowledge work with Open Agent Development Platform

- (00:24:09) DLSS 5 looks like a real-time generative AI filter for video games | The Verge

- (00:26:36)